
US Drafts AI Policy Requiring Firms To Grant ‘Irrevocable’ Government Access

- The Trump administration drafted new AI rules that would require companies to allow “any lawful” use of their models as a condition of US government contracts.

- The move followed the Pentagon labelling Anthropic a “supply-chain risk” and banning contractors from using its AI technology.

- Officials warned that restricting access to AI systems during a crisis could pose national security risks, fuelling the push for tighter rules.

The Trump administration has reportedly drafted rules requiring AI firms to allow the government to use their models for any lawful purpose.

The United States drew up the new artificial intelligence guidelines in response to its dispute with Anthropic; they would require AI businesses to allow the government to use their models for authorized purposes, according to a Financial Times report.

The draft guidelines, prepared by the U.S. General Services Administration (GSA) and reviewed by the Financial Times, stated that AI companies seeking government contracts must grant the U.S. an “irrevocable license” to use their systems. The guidance would apply to civilian contracts and strengthen the government’s approach to accessing AI services.

The draft also stated that contractors must ensure their AI systems do not intentionally embed partisan or ideological judgments in their outputs. In addition, companies would have to disclose whether their models had been modified or configured to comply with any non-U.S. government or commercial regulatory frameworks, the report said.

The developments followed a clash between the Department of War and Anthropic over how the company’s models could be used in military applications. The Pentagon, headquarters of the U.S. Defense Department, had sought full operational access to Anthropic’s AI systems, but the company refused to grant unrestricted access.

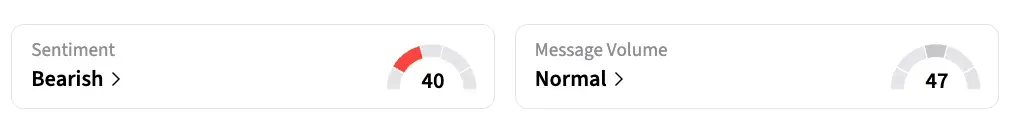

On Stocktwits, retail sentiment around Anthropic remained in the 'bearish' zone, accompanied by 'normal' chatter levels over the past day.

Pentagon Clash With Anthropic

The Pentagon and AI company Anthropic are at odds over how the U.S. military can use Anthropic’s AI system, Claude. Anthropic declined to remove the safeguards that keep its technology from being used for fully autonomous weapons or mass domestic surveillance, saying such uses are unsafe and wrong. The Pentagon then canceled its contract with the company, citing it as a potential “supply-chain risk,” which meant the company could no longer work on defense projects. Anthropic has said it will take legal action over the decision.

Following this, the Department of Defense formally designated Anthropic a “supply-chain risk” on Thursday.

US Department Of War Official Flags National Security Concern

Speaking on the All-In Podcast on Friday, Undersecretary of Defense for Research and Engineering Emil Michael said officials were “scared” that Anthropic could restrict access to its AI models during a national security crisis.

Michael said the dispute intensified when Anthropic CEO Dario Amodei suggested that Pentagon officials could call the company to request exceptions if certain uses of its AI systems were needed.

Michael said such an approach would be impractical in fast-moving military scenarios, including situations tied to President Donald Trump’s Golden Dome missile defense initiative.

Amodei said in a statement that he may challenge the decision in court. Anthropic is the first American company to be named a “supply-chain risk.”


For updates and corrections, email newsroom[at]stocktwits[dot]com.
